Why Finance Teams Confuse AI and Automation

The conflation is understandable. Both technologies reduce manual work. Both operate in the background once deployed. Both are sold by vendors using nearly identical language.

But the underlying mechanics are completely different, and those mechanics determine where each one adds value and where each one creates risk.

Automation executes. It follows a rule you've defined. If the rule is right, the output is right, every time, at scale. It doesn't learn. It doesn't adapt. It doesn't guess. That's the feature, not the limitation.

AI interprets. It synthesizes inputs, detects patterns, and generates probabilistic output. It can operate on data that no explicit rule could cleanly handle. But because its output is probabilistic, it requires human review, especially in high-stakes financial contexts.

The teams that confuse these two approaches typically land in one of two failure modes:

- They automate a process that required judgment. The system executes flawlessly and produces a wrong answer no one catches until a material variance shows up in a board presentation.

- They deploy AI on a process that required governance. The insight is interesting. But when the auditors ask for the methodology, no one can explain it. The output is real. The audit trail isn't.

Both failures are preventable. The prevention starts with a clear-eyed categorization of your processes.

The Right Question Before Any Deployment

Before you classify a process as an automation candidate or an AI use case, ask two questions:

Question 1: Can I write the rule?

If you can fully articulate the logic in plain language (every condition, every exception, and every edge case), the rule exists and automation can follow it.

If the logic requires judgment, pattern recognition, or interpretation of context that changes, then you're in AI territory.

Question 2: How high is the auditability requirement?

If the output of this process will be reviewed by an auditor, presented to a board, or used to make a capital allocation decision, then auditability is high. That means any AI involvement requires documented assumptions, defined confidence thresholds, and mandatory human review before the output is treated as authoritative.

Low-auditability contexts such as internal analysis, early-stage scenario work, and hypothesis generation allow for more AI-forward workflows with lighter governance.

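Taken together, the two answers place each process on a two-by-two matrix: rule certainty on one axis, auditability requirement on the other. The quadrant that requires the most attention is low rule certainty combined with high auditability. These are your highest-risk deployments. They need the most governance investment, and they're where the most expensive mistakes happen.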
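For teams that keep their process inventory in a script or notebook, the two questions can be sketched as a small classifier. This is a minimal illustration, not a prescribed tool: the names `Placement` and `place_on_matrix` and the example processes are hypothetical, and reducing each question to a yes/no answer is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    approach: str       # "automation" or "AI" (answer to Question 1)
    auditability: str   # "high" or "low" (answer to Question 2)
    highest_risk: bool  # True only for low rule certainty + high auditability


def place_on_matrix(can_write_rule: bool, high_auditability: bool) -> Placement:
    """Place a process in one of the four quadrants described above."""
    approach = "automation" if can_write_rule else "AI"
    auditability = "high" if high_auditability else "low"
    # The quadrant the article flags: no writable rule, but output must be auditable.
    highest_risk = (not can_write_rule) and high_auditability
    return Placement(approach, auditability, highest_risk)


# Hypothetical example processes, for illustration only.
examples = {
    "recurring journal entries": place_on_matrix(True, True),
    "early-stage scenario drafts": place_on_matrix(False, False),
    "board forecast commentary": place_on_matrix(False, True),
}
for name, placement in examples.items():
    print(f"{name}: {placement}")
```

In practice the two answers are rarely a clean yes or no, so a real inventory would likely record the reasoning behind each answer alongside the placement.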

Where Finance Automation Creates the Most Value

Finance automation is most powerful when it eliminates the execution burden from processes your team has already figured out. These are the workflows where the rule is known, the data source is defined, and consistency is more valuable than creativity.

High-value automation targets in FP&A:

- Recurring journal entries and period-end accruals
- Intercompany eliminations with defined consolidation logic
- Variance alerts triggered at defined thresholds
- Report distribution and scheduling
- Data validation checks against known parameters
- Balance sheet reconciliation against a defined source of record

A useful heuristic: if a junior analyst does this task the same way every month and could document the steps in under ten minutes, it belongs in automation.
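To make "the rule is known" concrete, here is a minimal sketch of one target from the list above: a variance alert triggered at defined thresholds. The `LineItem` type, the function name, the 10% and 5,000 thresholds, and the sample figures are all hypothetical. The point is only that the entire rule fits in a few lines that anyone can read and audit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineItem:
    account: str
    budget: float
    actual: float


def variance_alerts(items, pct_threshold=0.10, abs_threshold=5_000.0):
    """Return (account, variance, pct) for items that breach BOTH thresholds."""
    alerts = []
    for item in items:
        variance = item.actual - item.budget
        # A zero budget has no meaningful percentage, so the percentage test
        # passes automatically and only the absolute test decides.
        pct = abs(variance) / abs(item.budget) if item.budget else float("inf")
        if abs(variance) >= abs_threshold and pct >= pct_threshold:
            alerts.append((item.account, variance, pct))
    return alerts


# Hypothetical sample data.
items = [
    LineItem("Travel", budget=20_000.0, actual=26_000.0),     # 30% over, 6,000 absolute
    LineItem("Software", budget=50_000.0, actual=52_000.0),   # under the absolute threshold
    LineItem("Payroll", budget=400_000.0, actual=410_000.0),  # under the percentage threshold
]
for account, variance, pct in variance_alerts(items):
    print(f"ALERT {account}: variance {variance:,.0f} ({pct:.0%} of budget)")
```

Requiring both thresholds is a design choice made for this sketch; a team could just as reasonably alert on either one. Whichever is chosen, it should be written down, because that written rule is exactly what gets automated.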

The risk of under-automating here isn't just inefficiency. It's that manual execution of rule-based processes introduces the kind of human variability that creates reconciliation errors, delayed closes, and the fire-drill energy that consumes finance teams at exactly the wrong moments.

The risk of over-automating, deploying automation on a process the team hasn't fully codified, is subtler and more dangerous. You're not automating a process. You're automating your current understanding of a process, complete with its gaps and assumptions.

Document the rule completely before you automate it. Every time.

Where AI for FP&A Creates the Most Value

AI earns its place in finance where the data is complex, the question is novel, or the pattern is too large for a human analyst to detect unaided.

High-value AI use cases in FP&A:

-

Natural language querying of financial data across multiple systems

-

Anomaly detection across large transaction sets, surfacing outliers no rule would catch

-

Scenario narrative generation and executive commentary drafting

-

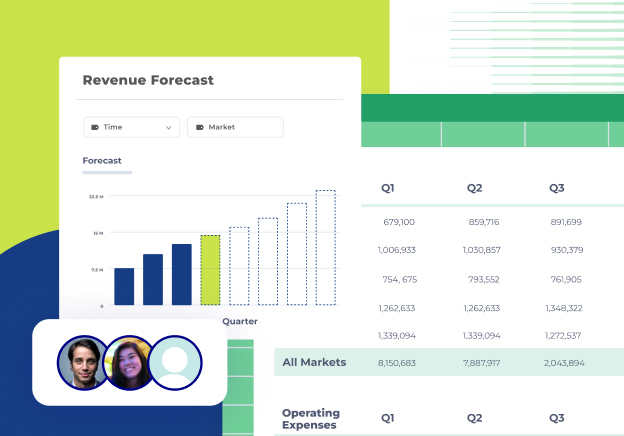

Synthesizing forecast inputs from sales, marketing, and operations into a coherent financial model

-

Predictive analysis on non-linear historical data

A useful heuristic for AI: if a senior analyst would need to genuinely think to answer this, and the output needs human review before it goes to leadership, that's an AI problem worth solving.

But there's a condition attached. AI in FP&A is only as trustworthy as the data it operates on. An AI model running on inconsistent, siloed financial data doesn't produce better insight than a spreadsheet. It produces faster-looking wrong answers, which is worse.

This is the governance reality that most AI-in-finance conversations don't address directly: AI doesn't make data trustworthy. The data has to be trustworthy first. The intelligence layer has to sit on top of a foundation that's already auditable, already connected, already reconciled.

The FP&A Automation Strategy Most Teams Are Missing

The highest-functioning finance teams aren't asking "should we use AI or automation?" They're asking a more precise question: "What does our data foundation look like, and what can we trust to run on top of it?"

Both automation and AI break down in the presence of disconnected, ungoverned data. Automation amplifies errors that exist in the source. AI learns from patterns that may be artifacts of bad data hygiene, not real business signals.

The implementation sequence that works:

- Step 1: Establish a single source of truth. Every FP&A tool, every data source, every workflow is connected, reconciled, governed. This isn't a technology project. It's a data strategy.

- Step 2: Automate the known. With a clean data foundation, automate every rule-based, repetitive process. Eliminate the manual execution burden that consumes 80% of your team's time.

- Step 3: Layer AI on top. Once your data is trustworthy and your baseline processes run without intervention, AI can generate insight, surface anomalies, and accelerate the analysis work that actually moves the business.

Teams that try to run Step 3 before completing Step 1 get impressive demos and disappointing results.

What to Do Next

If you're evaluating where AI vs. automation fits in your finance stack right now, here are the practical actions that move the needle:

-

Audit your existing automations. For each one: does anyone still know what rule it's following? Has the business process changed since it was built? Is anyone reviewing the output or has it become assumed infrastructure?

-

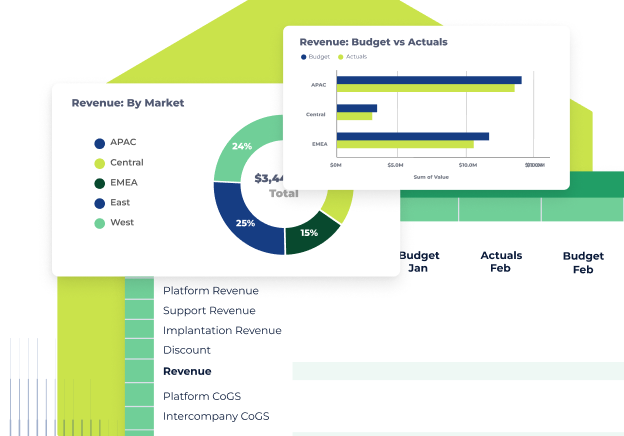

Categorize your AI candidates using the two-axis framework. Rule certainty on one axis. Auditability requirement on the other. Every process you're considering for AI investment should land somewhere on the matrix before you approve budget.

-

Define human sign-off requirements before deployment. For every AI output that will reach leadership or a board, name the person who reviews and owns it before it goes. Build that step into the workflow, not as an afterthought.

-

Assess your data foundation honestly. Before the next AI or automation initiative goes live: is there a single, trusted source of financial data underneath it? If the answer is uncertain, that's the investment to make first.

-

Train your team on the distinction. The CFO owns this framework. But the analysts deploying tools day-to-day need to understand it too. A 30-minute working session with your team on "when to reach for which tool" pays dividends for every initiative that follows.
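The sign-off action in the list above can also be built into the workflow as an explicit gate. The sketch below is one possible shape, under stated assumptions: the `AIOutput` fields, the 0.8 confidence threshold, and the status strings are hypothetical, and it assumes the AI tool reports a confidence score at all.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIOutput:
    description: str
    confidence: float               # model-reported score in [0, 1]; hypothetical
    reviewer: Optional[str] = None  # the named person who reviews and owns the output
    approved: bool = False


def release_status(output: AIOutput, min_confidence: float = 0.8) -> str:
    """Decide whether an AI output may go to leadership or a board."""
    if output.reviewer is None:
        return "blocked: no named reviewer"
    if output.confidence < min_confidence:
        # Design choice in this sketch: low confidence blocks release
        # even if a reviewer has already approved.
        return "blocked: below confidence threshold"
    if not output.approved:
        return f"pending: awaiting sign-off from {output.reviewer}"
    return f"released: approved by {output.reviewer}"


draft = AIOutput("Q3 forecast commentary", confidence=0.9)
print(release_status(draft))   # no reviewer has been named yet

draft.reviewer = "FP&A Director"
print(release_status(draft))   # reviewer named, sign-off still outstanding

draft.approved = True
print(release_status(draft))   # reviewed and owned, so it can go out
```

The ordering of the checks matters: an output with no named owner never reaches the approval step, which mirrors the advice to name the reviewer before deployment rather than after.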

Conclusion

The question isn't whether to use AI or automation in your finance team. It's whether you're using each one for the problem it was actually built to solve.

Automation delivers consistency at scale in processes you've already mastered. AI delivers insight in territory you haven't mapped yet. Both require trustworthy data underneath them. And both require a human being who owns the output, not just the tool.

The finance teams pulling ahead right now aren't the ones with the most sophisticated tools. They're the ones who've built the data foundation that makes every tool perform the way the vendor promised.

That foundation is the work worth doing first.

Cube helps finance teams build that foundation, a single source of truth that connects every FP&A tool and workflow so that when you deploy automation or AI, it runs on data you can actually trust. See how it works →
